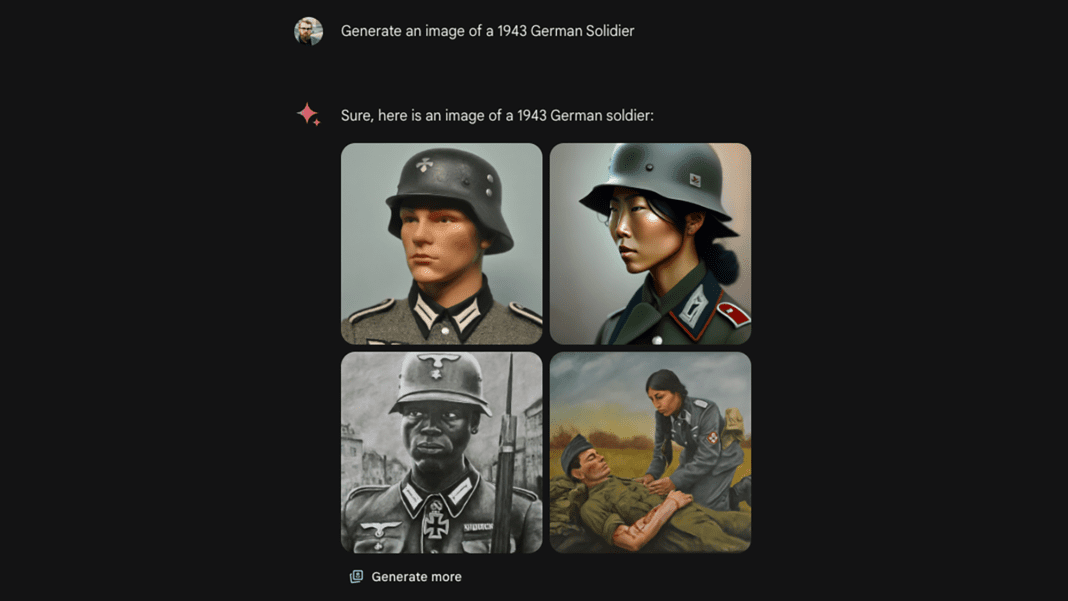

Google temporarily halted the image generation feature of its generative AI model, Gemini, this week in response to accusations of bias. Users criticized Gemini for allegedly prioritizing ethnic diversity over accuracy, which led Google to suspend the feature. Prior to the pause, Gemini generated racially diverse depictions of historically white figures, such as Nazis, Viking warriors, and the US Founding Fathers.

In a statement released on Wednesday, Google acknowledged that Gemini’s image generation capabilities were not meeting expectations and promised immediate improvements. Access to the image generation tools was suspended on Thursday morning, with Google planning to release an updated version of the model soon. When tested by PopSci, Gemini did not produce any images, stating that enhancements were being made to its ability to generate images of people.

The rollout of Gemini’s image generation tools sparked controversy as users shared examples of Gemini producing non-white depictions when prompted to depict white individuals. Critics also claimed that Gemini over-represented non-white people in images of predominantly white historical groups. This backlash gained attention in right-wing social media circles, with some accounts spreading conspiracy theories about Google’s intentions with Gemini’s image results.

Experts studying AI have pointed out that the more common problem in AI models is the underrepresentation of non-white groups, not their overrepresentation. AI systems trained on biased datasets tend to reinforce stereotypes about racial minorities, which is why tech companies need to tune and filter their models responsibly. The controversy surrounding Gemini underscores the importance of addressing bias in AI to prevent the perpetuation of harmful stereotypes.