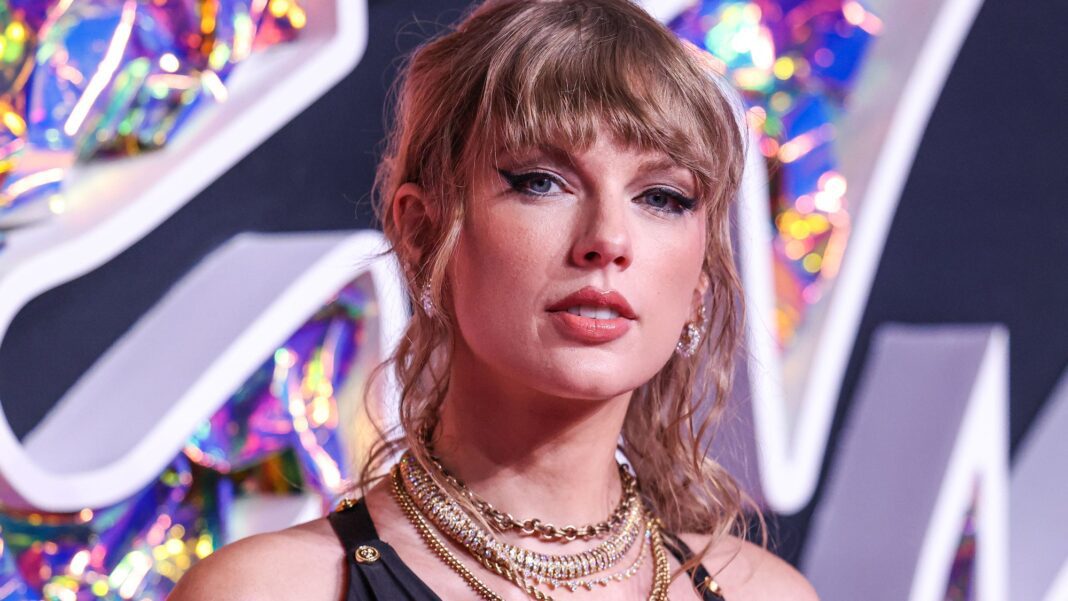

AI-generated images and video depicting singer Taylor Swift engaged in sexual activity surfaced on X, the platform formerly known as Twitter. According to reports, one post of these deepfake images was viewed 45 million times before it was removed. This led to a temporary ban on search results for Taylor Swift’s name on X. The circulation of the AI-generated content sparked a call for new laws criminalizing the spread of deepfake sexual material online.

The AI-generated Swift deepfake material reportedly originated on the message board 4chan and in a Telegram channel before emerging on X. The flood of deepfake content made it impossible to search for Taylor Swift without encountering these offensive images and videos. At one point, the content even trended, further amplifying its spread. X took 17 hours to remove the offending post, even though it violated the platform's terms of service.

On Sunday, X moderators blocked search results for “Taylor Swift” and “Taylor Swift AI” to limit the spread of the deepfakes on the platform. Swift’s fans also rallied under the hashtag #ProtectTaylorSwift, posting non-sexualized images of the singer to drown out the deepfakes and reporting accounts that shared the pornographic material. While the ban on Swift’s name was later lifted, X remains vigilant in monitoring and removing any similar offensive content.

Sexualized deepfakes of celebrities, including Taylor Swift, pose a recurring challenge beyond X.
