YouTube Enhances Disclosure Requirements for AI-Generated Content

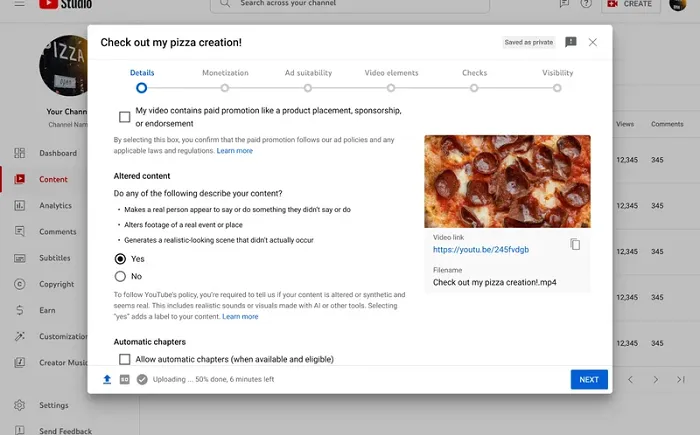

Creators on YouTube must now disclose more information about content made with AI tools. A new feature in Creator Studio requires creators to say when an upload looks realistic but was generated with AI.

When uploading content that has been altered or synthetically generated to look like real footage, creators must check a box to say so. The measure is meant to curb the spread of deepfakes and misinformation through manipulated or simulated content.

Once the box is checked, a label is shown with the video to tell viewers that the content has been altered or synthetically generated.

YouTube presents the new disclosure requirement as a way to build transparency and trust between creators and their audiences. Content that requires disclosure includes realistic likenesses of people, altered footage of real events or places, and generated scenes that look lifelike.

Not every use of AI requires disclosure. AI-generated scripts and other production assistance are exempt, as is “clearly unrealistic content” such as animation. Minor edits such as color adjustments, special effects, and beauty filters do not require the new disclosure either.

However, content that has the potential to mislead viewers must be labeled accordingly. YouTube reserves the right to add a disclosure label to content that features synthetic or manipulated media if the creator fails to do so.

The update continues the platform’s push for transparency around AI use, following the AI disclosure requirements it announced last year.

The growing volume of AI-generated content raises concerns about the authenticity and potential misuse of such media. Generated images that have confused viewers, and manipulated visuals used in political campaigns, underscore the need to regulate AI-generated content.

As AI technology advances, detecting synthetic content may become increasingly difficult. Techniques such as digital watermarking are being explored to help identify AI-generated content, but they have loopholes: re-filming the content on a phone, for example, can evade detection.

Enforcing disclosure rules is therefore central to a platform’s transparency standards. But as generative AI improves, particularly for video, synthetic content that goes unlabeled will become harder to catch.

Disclosure requirements are a stopgap. As AI technology keeps advancing, platforms will need to keep adapting how they handle AI-generated content.